Automated GPU Health Monitoring with NVIDIA NVSentinel on the Rafay Platform

GPU clusters are expensive and GPU failures are costly. In modern AI infrastructure, organizations operate large fleets of NVIDIA GPUs that can cost tens of thousands of dollars each. When a GPU develops a hardware fault (e.g. a double-bit ECC error, a thermal throttle, or a silent data corruption event), the consequences ripple outward: training jobs fail hours into a run, inference latency spikes, and expensive hardware sits idle while engineers scramble to diagnose the root cause.

Traditional monitoring catches these problems eventually, but rarely fixes them. Diagnosing and remediating GPU faults still requires deep expertise, and remediation timelines are measured in hours or days. For organizations running AI workloads at scale — and especially for GPU cloud providers who must deliver uptime SLAs to their tenants — this gap between detection and resolution translates directly into SLA breaches, lost revenue, and eroded customer trust.

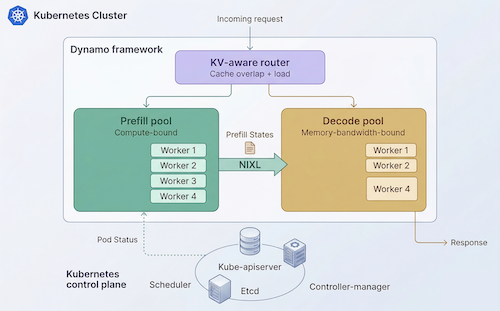

NVIDIA's answer to this challenge is NVSentinel — an open-source, Kubernetes-native system that continuously monitors GPU health and automatically remediates issues before they disrupt workloads.

In this blog post, we describe how Rafay integrates with NVSentinel, enabling GPU cloud operators and enterprises to deploy intelligent GPU fault detection and self-healing across their entire fleet consistently, repeatably, and at scale.